Artificial Intelligence has reached a point where models are growing bigger, better, and more powerful. But the challenge is: how do you run huge models, in the class of GPT-4 or LLaMA 3 and with up to 100 billion parameters, on regular devices without spending thousands on expensive GPUs?

That’s where Microsoft’s new open-source framework, bitnet.cpp, comes in. It breaks the barrier by allowing large language models (LLMs) to run efficiently on CPUs, using a clever technique called 1-bit quantization. You no longer need a data center or a high-end GPU to experience state-of-the-art AI performance.

Let’s explore how bitnet.cpp works, what it offers, and how you can use it to run powerful AI on your personal system.

What Is bitnet.cpp?

bitnet.cpp is an open-source framework developed by Microsoft that makes it possible to run large language models, up to 100 billion parameters, on standard CPU hardware. Whether you have an Apple M2 chip or a regular Intel CPU, bitnet.cpp helps you deploy huge models locally without the usual high costs.

It does this by using 1-bit quantization, a method that compresses model data and allows it to run faster and more efficiently. This way, large models become much lighter and easier to handle, even on consumer devices.

Why This Matters

Traditionally, running large AI models like GPT-3 or GPT-4 required powerful GPUs or TPUs. These are expensive and not accessible to everyone. Researchers, developers, and startups without large budgets often found it hard to experiment with or deploy large LLMs.

With bitnet.cpp, things are changing. Now, you can:

- Run huge models on a laptop or desktop CPU
- Save money on cloud computing or GPU rentals
- Keep data private with local execution
- Develop and test AI applications without hardware constraints

This opens the door for more innovation, education, and experimentation in the AI field.

Key Features of bitnet.cpp

1. Run Large Models Without GPUs

bitnet.cpp eliminates the need for dedicated GPUs. You can run powerful LLMs on regular CPUs, which are more widely available. This lowers the entry barrier for AI development and makes the technology more inclusive.

Imagine being able to test and run GPT-scale models on a MacBook or Intel-based workstation. That’s now possible thanks to this framework.

2. 1-Bit Quantization

This is the secret sauce behind bitnet.cpp. Normally, AI models use 32-bit floating-point numbers to represent their weights. bitnet.cpp works with weights compressed down to roughly 1 bit each (the BitNet b1.58 models it targets use ternary values of -1, 0, or +1, about 1.58 bits per weight), drastically reducing memory usage and speeding up computation.

Here’s what that means:

- Less RAM is needed to run the model
- Lower bandwidth requirements
- Much faster inference (model responses)
- Minimal drop in model accuracy

Despite the extreme compression, the inference quality stays almost the same. You still get accurate and useful outputs.

3. Multi-Platform Support

Whether you use an ARM-based chip (like Apple’s M2) or an x86 CPU (like Intel or AMD), bitnet.cpp runs smoothly. It’s optimized for different architectures, so you don’t need to worry about hardware compatibility.

This makes it ideal for both Mac and Windows users, as well as developers working on embedded or edge devices.

4. High Speed and Low Energy Use

Tests show that bitnet.cpp is significantly faster and more energy-efficient than older frameworks like llama.cpp. For example:

- A 13B model that runs at 1.78 tokens/second on llama.cpp can hit 10.99 tokens/second on bitnet.cpp.
- On Apple M2 Ultra, energy usage drops by up to 70%.
- On Intel i7-13700H, power savings go up to 82.2%.

That’s a game-changer, especially for battery-powered devices or large-scale deployments.

5. Huge Memory Savings

Large models in the GPT class can require hundreds of GB of memory in their full-precision form. With 1-bit quantization, bitnet.cpp shrinks them dramatically.

This allows these models to run on machines with much less RAM, making them usable on laptops, desktops, and even some edge devices.
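To make the savings concrete, here is a rough back-of-the-envelope sketch. It counts only the storage for the weights (no activations, caches, or per-block scaling factors), and the helper name `weight_memory_gb` is made up for illustration.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# A hypothetical 100-billion-parameter model at several weight widths.
for label, bits in [
    ("32-bit float", 32),
    ("16-bit float", 16),
    ("2-bit packed ternary", 2),
    ("1-bit", 1),
]:
    print(f"{label:>22}: {weight_memory_gb(100e9, bits):6.1f} GB")
```

Under these assumptions, the same model that needs about 400 GB at 32 bits per weight needs about 12.5 GB at 1 bit per weight, which is the difference between a server rack and a well-equipped desktop.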

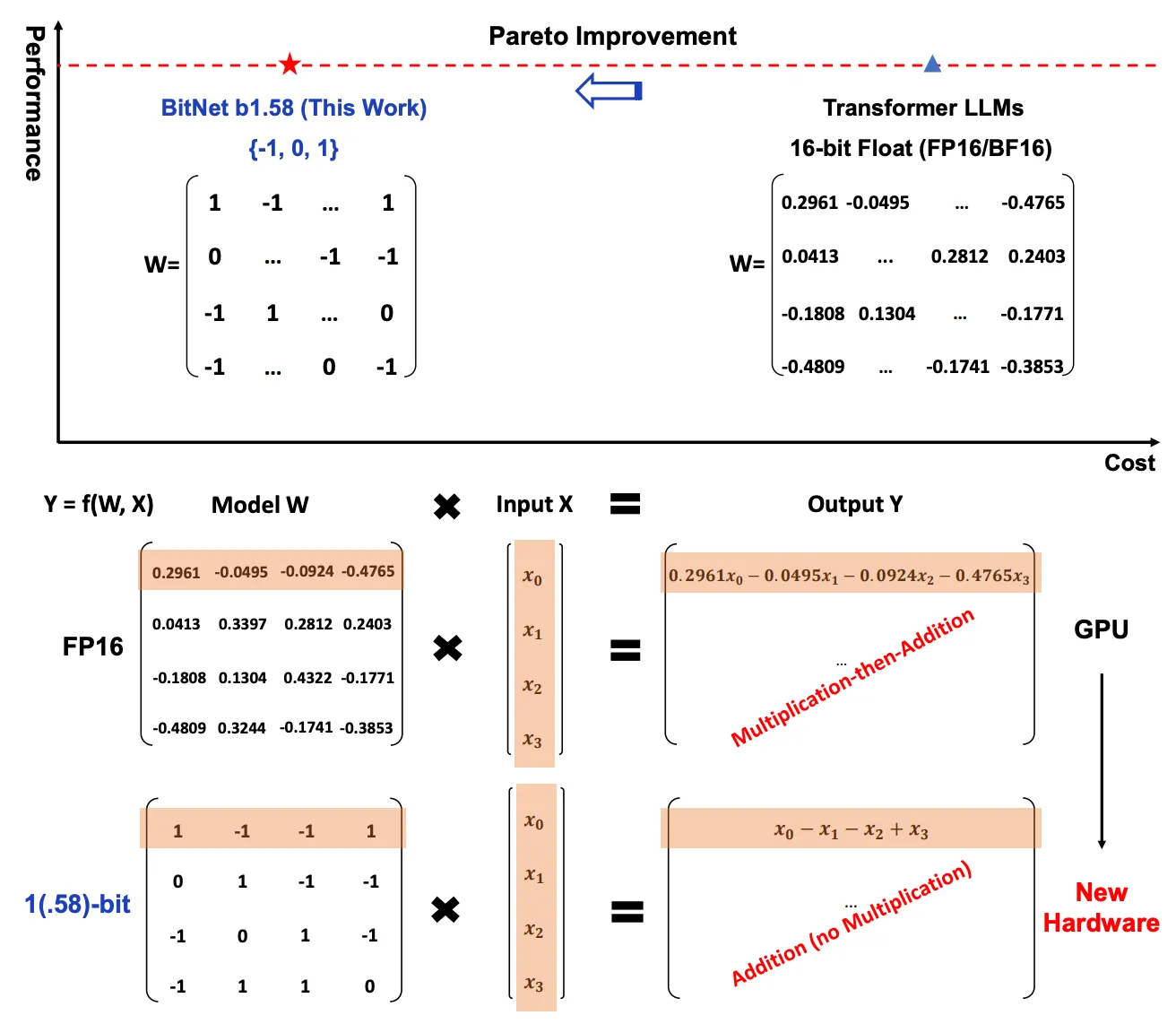

6. Pareto Optimality

bitnet.cpp aims for a Pareto-optimal trade-off: you get gains in speed, efficiency, and cost with no noticeable loss in output quality. This balance is ideal for real-world applications, where perfect accuracy isn’t always worth the resource cost.

You can now deploy large models for real-time applications like:

- Virtual assistants
- AI writing tools
- Local chatbots
- Voice-to-text systems
- Code generation

All without needing huge infrastructure.

Performance Overview

Here’s how bitnet.cpp compares to llama.cpp:

| Model Size | Tokens/sec (llama.cpp) | Tokens/sec (bitnet.cpp) | Speedup |
| --- | --- | --- | --- |
| 13B | 1.78 | 10.99 | 6.17x |
| 70B | 0.71 | 1.76 | 2.48x |

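As for energy efficiency, the figures cited above are reductions of up to 70% on the Apple M2 Ultra and up to 82.2% on the Intel i7-13700H.

These numbers show just how optimized bitnet.cpp really is.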
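The speedup column can be re-derived from the two throughput columns of the comparison table. The short sketch below does that and also translates tokens per second into waiting time for a reply; the 200-token reply length is an arbitrary assumption for illustration.

```python
# Throughput figures copied from the comparison table (tokens per second).
benchmarks = {
    "13B": {"llama.cpp": 1.78, "bitnet.cpp": 10.99},
    "70B": {"llama.cpp": 0.71, "bitnet.cpp": 1.76},
}


def speedup(baseline_tps: float, new_tps: float) -> float:
    """Ratio of the new throughput to the baseline throughput."""
    return new_tps / baseline_tps


def seconds_for(tokens: int, tps: float) -> float:
    """Time needed to generate a given number of tokens."""
    return tokens / tps


REPLY_TOKENS = 200  # assumed reply length, for illustration only

for size, tps in benchmarks.items():
    ratio = speedup(tps["llama.cpp"], tps["bitnet.cpp"])
    before = seconds_for(REPLY_TOKENS, tps["llama.cpp"])
    after = seconds_for(REPLY_TOKENS, tps["bitnet.cpp"])
    print(f"{size}: {ratio:.2f}x faster ({before:.0f}s -> {after:.0f}s per reply)")
```

Rounded to two decimals, the ratios come out to 6.17 and 2.48, matching the table.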

How bitnet.cpp Works

The power of bitnet.cpp comes from its technical foundation, specifically three main components:

1. 1-Bit Quantization

This compresses the model weights into 1-bit representations. Normally, weights are stored as 32-bit floating-point numbers. Reducing them to 1 bit slashes memory usage and computation needs.

But the magic lies in doing this without harming the model’s ability to generate accurate responses. It’s fast, efficient, and surprisingly reliable.
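As a rough illustration of the idea, the sketch below quantizes a handful of weights to the three values -1, 0, and +1 using an absolute-mean scale, in the style of the scheme described for BitNet b1.58. It is a toy in plain Python, not code from bitnet.cpp; the function names and sample weights are invented for the example.

```python
def quantize_ternary(weights: list[float], eps: float = 1e-8) -> tuple[list[int], float]:
    """Map each weight to -1, 0, or +1 and return the shared scale."""
    # The scale is the mean absolute value of the weights.
    scale = sum(abs(w) for w in weights) / len(weights)
    # Divide by the scale, round to the nearest integer, clip to [-1, 1].
    quantized = [max(-1, min(1, round(w / (scale + eps)))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate weights from the ternary codes."""
    return [q * scale for q in quantized]


weights = [0.42, -0.07, -0.91, 0.03, 0.55, -0.38]  # made-up sample values
codes, scale = quantize_ternary(weights)
print(codes)                      # small weights collapse to 0
print(dequantize(codes, scale))   # coarse approximation of the originals
```

Only the ternary codes and one scale per group of weights need to be stored. In the published BitNet approach the model is trained with this constraint in place, which is a large part of why quality holds up better than it would if a full-precision model were simply rounded after training.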

2. Optimized Kernels

bitnet.cpp uses optimized kernels to make computation faster and smarter:

- I2_S Kernel: Great for multi-core CPUs. It distributes tasks across threads efficiently.
- TL1 Kernel: Improves memory access and lookup speed.
- TL2 Kernel: Ideal for devices with limited memory or bandwidth.

These kernels are designed to make the best use of your CPU’s architecture and capabilities.
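None of the following is bitnet.cpp’s actual kernel code. It is a minimal sketch of two properties that make ternary weights cheap on a CPU: four weights fit in one byte at 2 bits each, and a dot product needs only additions and subtractions. The bit layout (storing each weight as `w + 1`) and all function names are assumptions made for illustration.

```python
def pack_ternary(weights: list[int]) -> bytes:
    """Pack ternary weights (-1, 0, +1) four per byte, 2 bits each."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4 in this sketch")
    out = bytearray()
    for i in range(0, len(weights), 4):
        byte = 0
        for j, w in enumerate(weights[i:i + 4]):
            if w not in (-1, 0, 1):
                raise ValueError(f"not a ternary weight: {w}")
            # Hypothetical encoding: -1 -> 0b00, 0 -> 0b01, +1 -> 0b10.
            byte |= (w + 1) << (2 * j)
        out.append(byte)
    return bytes(out)


def unpack_ternary(packed: bytes) -> list[int]:
    """Inverse of pack_ternary."""
    return [((byte >> (2 * j)) & 0b11) - 1 for byte in packed for j in range(4)]


def ternary_dot(packed: bytes, activations: list[float], scale: float) -> float:
    """Dot product that never multiplies by a weight: add, subtract, or skip."""
    acc = 0.0
    for w, x in zip(unpack_ternary(packed), activations):
        if w == 1:
            acc += x
        elif w == -1:
            acc -= x
    # A single multiplication by the shared scale at the end.
    return acc * scale


weights = [1, 0, -1, 0, 1, -1, 0, 1]
packed = pack_ternary(weights)
print(len(weights), "weights stored in", len(packed), "bytes")
print(ternary_dot(packed, [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0], scale=0.5))
```

Real kernels do the same kind of work with SIMD instructions or precomputed lookup tables rather than a Python loop, but the saving comes from the same place: less data to move and no per-weight multiplications.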

3. Broad Model Compatibility

bitnet.cpp works with different model sizes and types, from small LLaMA models to huge 100B-parameter models. This flexibility makes it suitable for developers at all levels.

How to Use bitnet.cpp

Here’s how to get started with bitnet.cpp on your machine:

Step 1: Clone the Repository

git clone --recursive https://github.com/microsoft/BitNet.git

cd BitNet

Step 2: Set Up the Environment

Create and activate a Python environment:

conda create -n bitnet-cpp python=3.9

conda activate bitnet-cpp

pip install -r requirements.txt

Step 3: Download and Quantize the Model

You’ll need to pull a model from Hugging Face and quantize it using bitnet.cpp’s tools:

python setup_env.py --hf-repo HF1BitLLM/Llama3-8B-1.58-100B-tokens -q i2_s

Step 4: Run Inference

Now you’re ready to use the model:

python run_inference.py -m models/Llama3-8B-1.58-100B-tokens/ggml-model-i2_s.gguf -p "Enter your prompt here."

You’ll get fast responses from the model, all without using a GPU.
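If you would rather call the model from a script, a thin wrapper like the one below may help. It uses only the script name and the `-m` and `-p` flags from the Step 4 command. The helpers `expected_model_path` and `build_inference_command` are hypothetical, and the output location is inferred from the Step 3 and Step 4 commands, so adjust it if your checkout places the file elsewhere. Run it from the root of the cloned repository.

```python
import subprocess
import sys
from pathlib import Path


def expected_model_path(hf_repo: str, quant_type: str = "i2_s") -> Path:
    """Guess where the quantized model ends up (layout assumed from Steps 3 and 4)."""
    model_name = hf_repo.split("/")[-1]
    return Path("models") / model_name / f"ggml-model-{quant_type}.gguf"


def build_inference_command(model_path: Path, prompt: str) -> list[str]:
    """Build the same command line as Step 4, as an argument list."""
    return [sys.executable, "run_inference.py", "-m", str(model_path), "-p", prompt]


if __name__ == "__main__":
    model = expected_model_path("HF1BitLLM/Llama3-8B-1.58-100B-tokens")
    command = build_inference_command(model, "Enter your prompt here.")
    if model.exists():
        # check=True raises CalledProcessError if the script exits with an error.
        subprocess.run(command, check=True)
    else:
        print(f"Model not found at {model}; run Step 3 first.")
```

Passing the command as a list rather than a single string avoids shell quoting problems when the prompt contains quotes or other special characters.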

Real-World Applications

With bitnet.cpp, you can build powerful AI tools on budget hardware. Here are a few ideas:

- AI Writers: Create tools like WordGPT or Notion AI for content generation.
- Private Chatbots: Run a local chatbot without an internet connection or server dependency.
- Educational Tools: Let students explore AI development on their own devices.
- Edge AI: Deploy models on IoT or embedded devices without needing external servers.
- Cost-Cutting AI Apps: Build scalable AI services without burning money on GPU cloud time.

Future of Accessible AI

bitnet.cpp is more than just a framework. It represents a shift in how we think about AI deployment. Instead of relying on cloud giants or expensive infrastructure, developers can now bring AI closer to the edge, into homes, schools, and small businesses.

This is the democratization of AI in action.

By making large models light and fast, Microsoft’s bitnet.cpp gives everyone the power to innovate with cutting-edge AI. Whether you’re an AI hobbyist or a developer building the next viral app, bitnet.cpp gives you the tools to succeed.

What’s Next?

As the field of AI continues to grow, tools like bitnet.cpp will lead the way in making AI more efficient and accessible. Expect more improvements, broader model support, and community contributions.

If you want to explore even more, try BotGPT, a custom chatbot builder that lets you create smart bots tailored to your needs using similar technology. You can integrate it into your apps, websites, or business tools and unlock next-level automation.

Conclusion

bitnet.cpp by Microsoft is a groundbreaking open-source project that helps you run powerful language models on standard CPUs. Thanks to smart engineering like 1-bit quantization and optimized kernels, it brings big-model performance to everyday machines.

Whether you’re a solo developer, student, startup, or enterprise, this tool can supercharge your AI journey without breaking the bank.
