Every millisecond matters. When a user loads a webpage, advertisers enter a silent auction that completes in under 100 milliseconds. The platform that responds faster wins the impression. The one that's late loses revenue and trust.

That is real-time bidding (RTB), and it is unforgiving.

For AdTech platforms handling 100 billion auction requests daily, the infrastructure choice between bare metal and public cloud isn't academic; it directly affects win rates, revenue, and competitiveness. As latency-sensitive workloads increasingly demand AI-powered inference and real-time model training, the case for dedicated, optimized infrastructure has become impossible to ignore.

The RTB Gauntlet: Racing Against the Clock

Real-time bidding is one of the most demanding computing challenges in the modern digital economy. Exchanges expect end-to-end responses within roughly 100 milliseconds, but after accounting for network latency between the exchange and your DSP, the actual processing window shrinks to a razor-thin 50–80 milliseconds. If your system is even 50 milliseconds too slow, you lose that impression to a faster competitor.
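The arithmetic behind that window can be sketched in a few lines. This is an illustration only: the function name and the round-trip times are assumptions chosen to reproduce the 50–80 millisecond range above, not measurements from any exchange.

```python
def processing_window_ms(deadline_ms: float, network_rtt_ms: float) -> float:
    """Time left for the DSP itself once the network round trip is subtracted."""
    if deadline_ms <= 0 or network_rtt_ms < 0:
        raise ValueError("deadline must be positive and RTT non-negative")
    # A round trip longer than the deadline leaves no time at all.
    return max(0.0, deadline_ms - network_rtt_ms)


# Hypothetical exchange-to-DSP round-trip times, in milliseconds.
for rtt in (20, 35, 50):
    window = processing_window_ms(100, rtt)
    print(f"RTT {rtt} ms -> {window:.0f} ms left to process the bid")
```

Under these assumed round-trip times, a 100 millisecond deadline leaves between 50 and 80 milliseconds of actual compute time.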

The infrastructure supporting this operates at a mind-bending scale. Major ad exchanges process over 500,000 auctions per second during peak hours, with some handling upwards of 600 billion bid requests daily. During peak shopping seasons and sporting events, these volumes explode even further.

The latency budget allocation shows why infrastructure choice matters so dramatically:

RTB Latency Budget Allocation: How the 100ms Deadline Is Distributed

In bare metal environments, that overhead buffer shrinks significantly, leaving more time for actual intelligent bidding decisions. In cloud VMs, virtualization overhead consumes 30–45% of your available processing window, time you can't get back.
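To make the 30–45% figure concrete, the sketch below applies it to the 50–80 millisecond processing window discussed earlier. The function name is hypothetical, and treating the overhead as a flat fraction of the window is a simplifying assumption.

```python
def usable_window_ms(window_ms: float, overhead_fraction: float) -> float:
    """Processing time that remains after a fractional overhead is removed."""
    if not 0.0 <= overhead_fraction < 1.0:
        raise ValueError("overhead_fraction must be in [0, 1)")
    return window_ms * (1.0 - overhead_fraction)


# Windows and overhead fractions taken from the figures quoted in the text.
for window in (50, 80):
    for overhead in (0.30, 0.45):
        left = usable_window_ms(window, overhead)
        print(f"{window} ms window, {overhead:.0%} overhead -> {left:.1f} ms usable")
```

At the unfavorable end (a 50 millisecond window and 45% overhead), only 27.5 milliseconds remain for the bidding logic itself.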

When companies actually measure performance head-to-head, the results are striking. Bare metal configurations consistently deliver sub-100ms P99 latencies, with average response times around 12 milliseconds. Cloud VMs typically land in the 120–150ms range, a 30–40 millisecond gap that is often the difference between winning and losing an auction.
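Since the comparison above mixes P99 and average latency, it can help to see how the two differ. The sketch below uses the nearest-rank percentile method on made-up samples; the sample values are illustrative assumptions, not benchmark data.

```python
import math
from statistics import mean


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Hypothetical latencies in ms: mostly fast, with a slow tail.
latencies = [12.0] * 98 + [95.0, 140.0]
print(f"mean = {mean(latencies):.2f} ms")
print(f"P50  = {percentile(latencies, 50):.0f} ms")
print(f"P99  = {percentile(latencies, 99):.0f} ms")
```

The mean stays close to the typical request, while P99 exposes the slow tail. That tail is what decides whether a response makes the auction deadline.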

One blockchain infrastructure provider ran a direct comparison test under identical conditions: same city, same bandwidth, same workload. The cloud node started at 28ms, but once real users hit the system, latency spiked to 150ms. The bare metal node stayed at a consistent 12ms, even under full load.

Why does this happen? Virtualization overhead bleeds performance across every resource type:

Virtualization Overhead by Resource Type: The Hidden Cost of Cloud VMs

- CPU overhead: 9–12% on modern hypervisors (Hyper-V), 17% on Intel virtualization, up to 38% on AMD
- Memory overhead: ~12% due to hypervisor memory management and per-VM allocations
- Disk I/O: 6–8% overhead from emulated storage layers
- Network I/O: 15–25% overhead from virtual network interfaces and packet processing

These costs cascade. When processing decisions must be completed in milliseconds, every percentage point of overhead can push you over the latency cliff.

Real-Time Bidding at Its Core

DSPs (Demand-Side Platforms) are the engines that power RTB, operating under brutal constraints. They must handle millions of ad requests per second while parsing requests, fetching user profiles, running complex pricing algorithms, and generating responses with creative data and tracking pixels, all within 50–80 milliseconds.

A production RTB system typically allocates 10–20 milliseconds for the actual decision-making phase, leaving precious little margin for error. Research on major DSPs shows that bid response times average 5–20 milliseconds depending on targeting complexity, but degrade by up to 35% during peak traffic periods in under-optimized systems. One major Chinese DSP reduced average response times from 23ms to 9ms purely through algorithmic improvements, without any hardware upgrade.
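One common way to enforce a decision budget like this is to wrap the pricing step in a timeout and fall back to a no-bid when it overruns. The sketch below uses Python's `asyncio.wait_for`. The `score_bid` function, the request shape, and the 20 millisecond constant are hypothetical stand-ins, not any particular DSP's implementation.

```python
import asyncio
from typing import Optional

# Upper end of the 10-20 ms decision phase mentioned above (assumed value).
DECISION_BUDGET_S = 0.020


async def score_bid(request: dict) -> float:
    """Hypothetical pricing model; the sleep simulates lookup and inference time."""
    await asyncio.sleep(request.get("simulated_latency_s", 0.0))
    return 1.25  # placeholder bid price


async def bid_or_pass(request: dict, budget_s: float = DECISION_BUDGET_S) -> Optional[float]:
    """Return a bid price, or None (no-bid) if scoring overruns the budget."""
    try:
        return await asyncio.wait_for(score_bid(request), timeout=budget_s)
    except asyncio.TimeoutError:
        # A late bid is worthless to the exchange, so pass instead.
        return None


fast = asyncio.run(bid_or_pass({"simulated_latency_s": 0.0}))
slow = asyncio.run(bid_or_pass({"simulated_latency_s": 0.5}))
print(f"fast request -> {fast}, slow request -> {slow}")
```

The design choice here is to treat a timeout as a normal outcome, not an error. Passing on one auction is cheaper than holding a worker on a response the exchange will discard.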

Building the Right Infrastructure for Each AdTech Component

Minimum Hardware Specifications for AdTech Platform Components

Demand-Side Platforms (DSPs) need high-frequency, multi-core processors optimized for raw speed: 24–64 high-frequency cores (favoring clock speed over core count), 256–512GB RAM for keeping user profiles and campaign data in memory, 10–25 GbE minimum network bandwidth, and 1–2TB NVMe storage for warm data and telemetry.

Supply-Side Platforms (SSPs) manage billions of impressions daily and prioritize throughput and concurrency: 24–48 cores with strong per-core performance, 256GB to 1TB RAM for publisher inventory catalogs and yield optimization, 25–100 GbE for handling millions of concurrent bid requests, and hybrid storage approaches with fast SSDs for active inventory and large HDDs for historical data.

Data Management Platforms (DMPs) emphasize data processing and bulk analytics: 12–24 cores with support for advanced instruction sets (AVX-512), 256GB to 2TB RAM depending on dataset size and segmentation needs, 10–40 GbE network bandwidth where throughput matters more than ultra-low latency, and massive tiered capacity with NVMe for active segments and 100TB+ HDD arrays for historical data.

Ad Exchanges orchestrate auctions in real time and demand absolute consistency: 32–64 cores at the highest available clock speeds (potentially on single-socket servers to minimize NUMA effects), 512GB to 2TB RAM for maintaining state on millions of concurrent auctions, 25/50/100 GbE+ with direct peering to major DSPs and SSPs, and all-flash storage for operational data with the hottest keys in memory.

The Optimization Breakthrough: From 29ms to 5ms

Real-time bidding has seen dramatic performance improvements through infrastructure optimization and architectural innovation. Research demonstrates that moving from traditional architectures to optimized pipelined processing can reduce average latency from 29 milliseconds to just 8 milliseconds, a 72% improvement. When combined with Kafka KIP-500 architecture for distributed stream processing, systems can achieve sub-5 millisecond latencies.

RTB Processing Optimization Improvements: Path to Sub-10ms Latency

Key optimization gains include:

- Pipelined processing architectures: 72% latency reduction (29ms → 8ms)
- Kafka KIP-500 architecture: 83% latency reduction, with infrastructure costs dropping 44% while throughput capacity increases 2.7x
- Multi-level caching strategies: 65–80% reduction in data access times, with 47% lower average bid processing times during high-traffic periods through smart prefetching algorithms, achieving 76.3% cache hit rates

These optimizations compound when running on bare metal infrastructure without virtualization overhead stealing cycles.
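As an illustration of the multi-level caching idea, here is a minimal two-level sketch: a small LRU tier in front of a larger second tier, with a hit-rate counter. The class name, capacities, and the dict standing in for a slow profile store are all assumptions, and prefetching is left out for brevity.

```python
from collections import OrderedDict


class TwoLevelCache:
    """Small LRU tier (L1) in front of a larger tier (L2), backed by a slow store."""

    def __init__(self, backing_store: dict, l1_capacity: int = 2):
        self._l1 = OrderedDict()   # hottest keys, evicted least-recently-used first
        self._l2 = {}              # larger, slower tier (unbounded in this sketch)
        self._store = backing_store
        self._l1_capacity = l1_capacity
        self.hits = 0
        self.lookups = 0

    def get(self, key):
        self.lookups += 1
        if key in self._l1:
            self._l1.move_to_end(key)      # mark as most recently used
            self.hits += 1
            return self._l1[key]
        if key in self._l2:
            self.hits += 1
            value = self._l2[key]
        else:
            value = self._store[key]       # slowest path: a full miss
            self._l2[key] = value
        self._promote(key, value)
        return value

    def _promote(self, key, value):
        self._l1[key] = value
        if len(self._l1) > self._l1_capacity:
            self._l1.popitem(last=False)   # drop the least recently used key

    @property
    def hit_rate(self) -> float:
        return self.hits / self.lookups if self.lookups else 0.0


profiles = {"u1": {"segment": "sports"}, "u2": {"segment": "travel"}, "u3": {"segment": "autos"}}
cache = TwoLevelCache(profiles, l1_capacity=2)
for user in ["u1", "u2", "u1", "u3", "u2", "u1"]:
    cache.get(user)
print(f"hit rate: {cache.hit_rate:.1%}")
```

In a real deployment the second tier would typically be a networked cache and the backing store a database. The point of the sketch is only the lookup order and how a hit rate is measured.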

The “Noisy Neighbor” Tax: Why Shared Resources Fail AdTech

Cloud environments introduce a hidden performance killer: the “noisy neighbor” effect. When other tenants on your shared physical host consume I/O bandwidth, network capacity, or CPU cycles, your virtual machine suffers collateral damage.

A single VM running heavy database backups can saturate the I/O bandwidth of the entire shared storage array, forcing all other VMs to wait. A neighbor running aggressive network operations can saturate the physical NIC, causing packet loss and increased latency for everyone else.

For AdTech, this is catastrophic. When milliseconds determine winners and losers, you can't afford performance variability caused by unknown workloads running on the same physical hardware. Bare metal eliminates this problem entirely; your servers don't share resources with competitors, and your performance stays consistent, predictable, and repeatable.

Real-World Impact: The Numbers That Matter

Companies making the switch to dedicated infrastructure report stunning efficiency gains. spheron.ai customers report up to 86% lower compute costs and 3× better system performance compared with virtualized deployments. Neon Labs achieved real-time response targets while cutting cloud costs by 60%. One optimization case study (PowerLinks) reduced infrastructure spending from $200,000/month to $10,000/month, a 20× improvement, without sacrificing performance.

The Trade Desk, one of the largest DSPs globally, spent $264 million on platform operations during the first nine months of 2023, roughly $970,000 per day on infrastructure alone. This shows the scale at which serious AdTech platforms operate. At that scale, infrastructure decisions compound: a 5% performance improvement across millions of daily auctions translates to millions in recovered revenue, while a 20% cost reduction directly improves margins.

For small, early-stage AdTech platforms, the cloud offers speed and flexibility; you can spin up capacity instantly without hardware lead times, and you only pay for what you use. This works in year one.

But starting in year two, the economics flip. Bare metal infrastructure, with its higher upfront costs, amortizes across stable, predictable workloads. By year three, the total cost of ownership advantage becomes undeniable. By year five, bare metal can deliver 20–50% cost savings.

The crossover point typically occurs when traffic stabilizes (not constantly spiking), you can predict resource usage 3–6 months ahead, your DSP/SSP platform handles millions of daily impressions, and performance consistency matters more than elastic scaling. For serious AdTech operations, this threshold arrives quickly.
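A rough way to reason about the crossover is to compare cumulative spend month by month. The sketch below does that with entirely hypothetical prices (a $300,000 upfront hardware cost, $20,000/month to run bare metal, $40,000/month for equivalent cloud capacity). Real figures vary widely, so treat the numbers as placeholders.

```python
from typing import Optional


def crossover_month(upfront_bare_metal: float, monthly_bare_metal: float,
                    monthly_cloud: float, horizon_months: int = 60) -> Optional[int]:
    """First month where cumulative bare metal cost is at or below cloud cost, else None."""
    for month in range(1, horizon_months + 1):
        bare_metal_total = upfront_bare_metal + monthly_bare_metal * month
        cloud_total = monthly_cloud * month
        if bare_metal_total <= cloud_total:
            return month
    return None


def savings_fraction(upfront_bare_metal: float, monthly_bare_metal: float,
                     monthly_cloud: float, months: int) -> float:
    """Fraction of the cloud bill saved by bare metal over the given period."""
    bare_metal_total = upfront_bare_metal + monthly_bare_metal * months
    cloud_total = monthly_cloud * months
    return 1.0 - bare_metal_total / cloud_total


# Hypothetical costs in USD.
month = crossover_month(300_000, 20_000, 40_000)
five_year = savings_fraction(300_000, 20_000, 40_000, months=60)
print(f"break-even in month {month}; five-year savings {five_year:.1%}")
```

With these placeholder inputs the break-even lands in month 15 and five-year savings come to 37.5%, which sits inside the 20–50% range quoted above. Different inputs will move both numbers.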

The Hidden Costs of Virtualization

Cloud adoption creates upstream complexity and cost that rarely appear on initial bills. The virtualization stack itself (hypervisor software licensing, management platforms such as vCenter and Kubernetes control planes, and monitoring systems) adds up fast. Enterprise-grade virtualized environments require sophisticated resource management and active optimization to avoid wasting capacity.

Cloud teams must over-provision resources to buffer against the unpredictable performance degradation of noisy neighbors. This “insurance cost” gets baked into monthly bills as unused capacity sitting idle to handle worst-case scenarios. Additionally, the skills required to debug performance issues in virtualized environments are specialized and expensive. When latency problems emerge, distinguishing between application bugs, virtualization overhead, and noisy neighbor effects requires expertise that can take weeks to develop.

Building the Right Hybrid Strategy

Sophisticated AdTech companies don't treat this as either-or. Instead, they build hybrid strategies:

On Bare Metal (Latency-Critical Hot Paths):

- Real-time bidding decision engines
- User profile cache and feature serving
- Ad exchange transaction processing
- On-host monitoring and profiling tools
- Low-latency network infrastructure

On Cloud (Flexible, Burst Workloads):

- ML model training on large datasets
- Data warehouse and analytics pipelines
- CI/CD pipelines and testing infrastructure
- Batch reporting and aggregation jobs
- Development and staging environments

This approach gives you raw performance and deterministic latency where milliseconds decide outcomes, plus the flexibility and scale of the cloud where absolute speed isn't the primary constraint.

The Future Demands Decisiveness

Looking ahead, AdTech infrastructure faces mounting pressure. Generative creative optimization and dynamic model-driven bidding are becoming mainstream, making GPU compute and low-latency inference pipelines foundational rather than optional. Global energy prices remain volatile due to geopolitical tensions, and because AdTech must maintain speed and scale 24/7, unlike industries that can dial back operations, the efficiency of bare metal becomes increasingly attractive as cloud energy costs get passed on to customers.

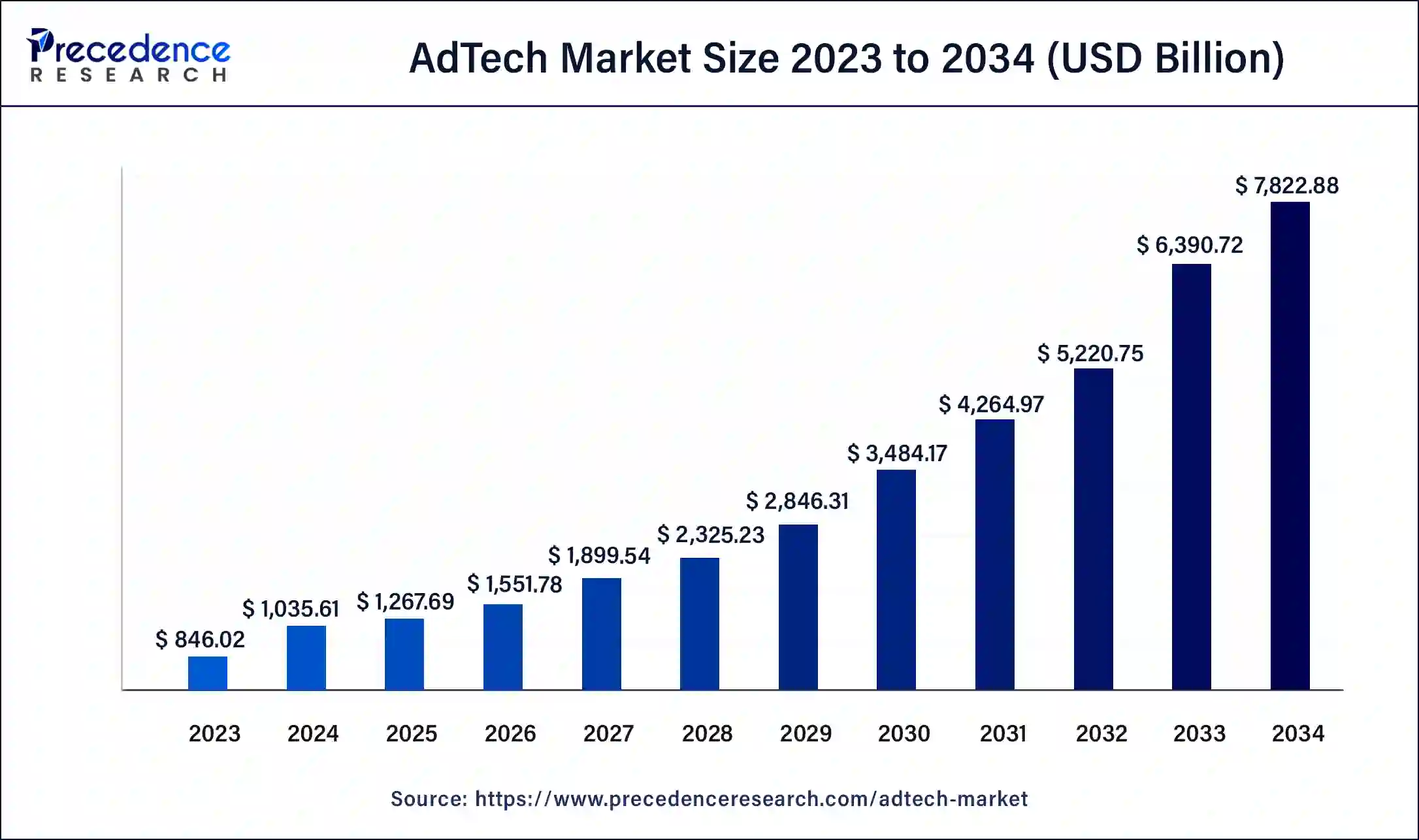

With cookie deprecation and privacy regulations tightening, platforms are moving to first-party identity solutions and real-time data consolidation, requiring infrastructure that offers full control and isolation, something bare metal provides natively. The global AdTech market is projected to grow from $1.04 trillion in 2024 to $7.82 trillion by 2034 at a 22.35% CAGR, explosive growth that will require infrastructure that scales efficiently, where bare metal wins on both performance and unit economics at scale.
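The projection above is internally consistent, which a quick compound-growth check confirms. The helper names below are illustrative; the inputs are the figures quoted in the text.

```python
def project(value: float, annual_rate: float, years: int) -> float:
    """Compound a starting value forward at a fixed annual growth rate."""
    return value * (1.0 + annual_rate) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Annual growth rate that takes start to end over the given number of years."""
    return (end / start) ** (1.0 / years) - 1.0


# $1.04 trillion in 2024 growing at 22.35% per year for 10 years.
print(f"projected 2034 market: ${project(1.04, 0.2235, 10):.2f} trillion")
print(f"implied CAGR: {implied_cagr(1.04, 7.82, 10):.2%}")
```

Both directions agree with the quoted numbers to within rounding.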

The Decision Point: Speed Versus Flexibility

The fundamental trade-off in infrastructure choice comes down to this: do you optimize for speed or for flexibility? For early-stage platforms still finding product-market fit, cloud makes sense. You can pivot, experiment, and scale elastically, and overhead costs are acceptable when infrastructure expenses are modest.

But for platforms that have stabilized and grown, that operate at predictable scale, and where latency directly affects revenue, the decision should be clear: bare metal is the superior choice.

Here's why:

Predictable Performance: No virtualization overhead means consistent response times. Your win rate doesn't depend on what another customer is doing on the same physical hardware.

Customization and Control: Kernel tuning, network stack optimization, specialized monitoring tools, and custom security configurations all become possible. You can optimize for your actual workload, not a generic VM template.

Powerful Hardware: Modern dedicated servers ship with high-frequency CPUs, large RAM (often 256GB–1TB+), NVMe SSD storage, and 10/25/50/100 GbE networking, hardware purpose-built for the demands of real-time bidding.

Long-Term Economics: For stable workloads, bare metal delivers lower per-transaction costs and more predictable budgeting than monthly cloud bills that escalate with scale.

Competitive Advantage: In a business measured in milliseconds, a 30–50 millisecond latency advantage translates to more wins, higher revenue, and more market share. The competitors who invested in the right infrastructure first will keep that edge.

The Call: Invest Now or Regret Later

The AdTech industry doesn't reward complacency. Platforms that made the infrastructure investment five years ago are now operating at 2–3× the efficiency of those still relying on the cloud. The companies making the right choice today will be the market leaders of tomorrow.

Bare metal infrastructure isn't a trendy technical detail; it's the foundational strategy that separates winning platforms from commodity players in a business where every millisecond translates to revenue.

For any AdTech platform serious about scale, predictability, and performance, bare metal infrastructure isn't just better. It's essential.
