
If you’re not a developer, then why on earth would you want to run an open-source AI model on your home computer?

It turns out there are a number of good reasons. And with free, open-source models getting better than ever, and simple to use with minimal hardware requirements, now is a great time to give it a shot.

Here are a few reasons why open-source models are better than paying $20 a month to ChatGPT, Perplexity, or Google:

- It’s free. No subscription fees.

- Your data stays on your machine.

- It works offline, no internet required.

- You can train and customize your model for specific use cases, such as creative writing or… well, anything.

The barrier to entry has collapsed. Now there are specialized programs that let users experiment with AI without all the hassle of installing libraries, dependencies, and plugins independently. Almost anyone with a fairly recent computer can do it: A mid-range laptop or desktop with 8GB of video memory can run surprisingly capable models, and some models run on 6GB or even 4GB of VRAM. And for Apple, any M-series chip (from the last few years) will be able to run optimized models.

The software is free, the setup takes minutes, and the most intimidating step, choosing which tool to use, comes down to a simple question: Do you prefer clicking buttons or typing commands?

LM Studio vs. Ollama

Two platforms dominate the local AI space, and they approach the problem from opposite angles.

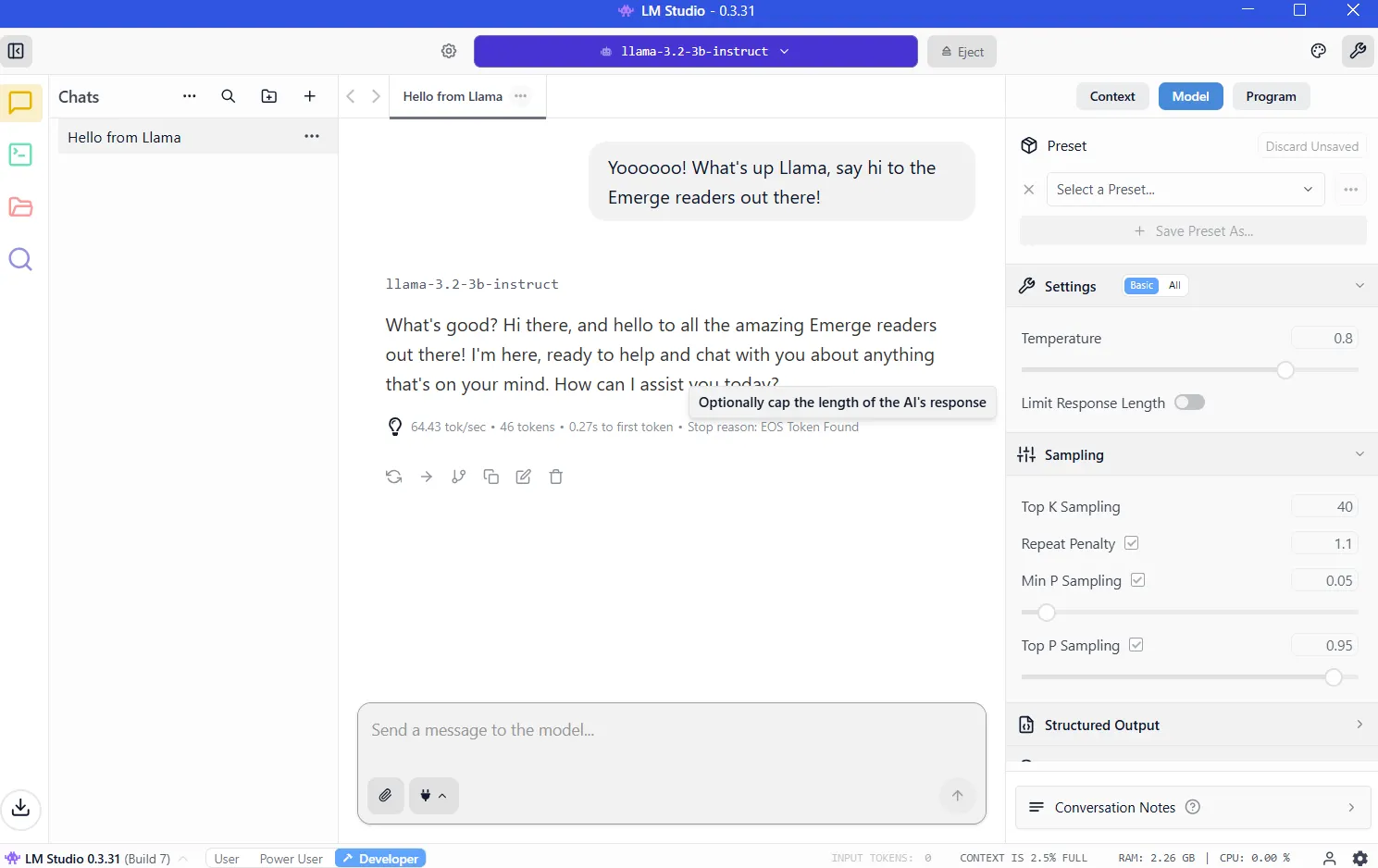

LM Studio wraps everything in a polished graphical interface. You can simply download the app, browse a built-in model library, click to install, and start chatting. The experience mirrors using ChatGPT, except the processing happens on your hardware. Windows, Mac, and Linux users get the same smooth experience. For newcomers, this is the obvious place to start.

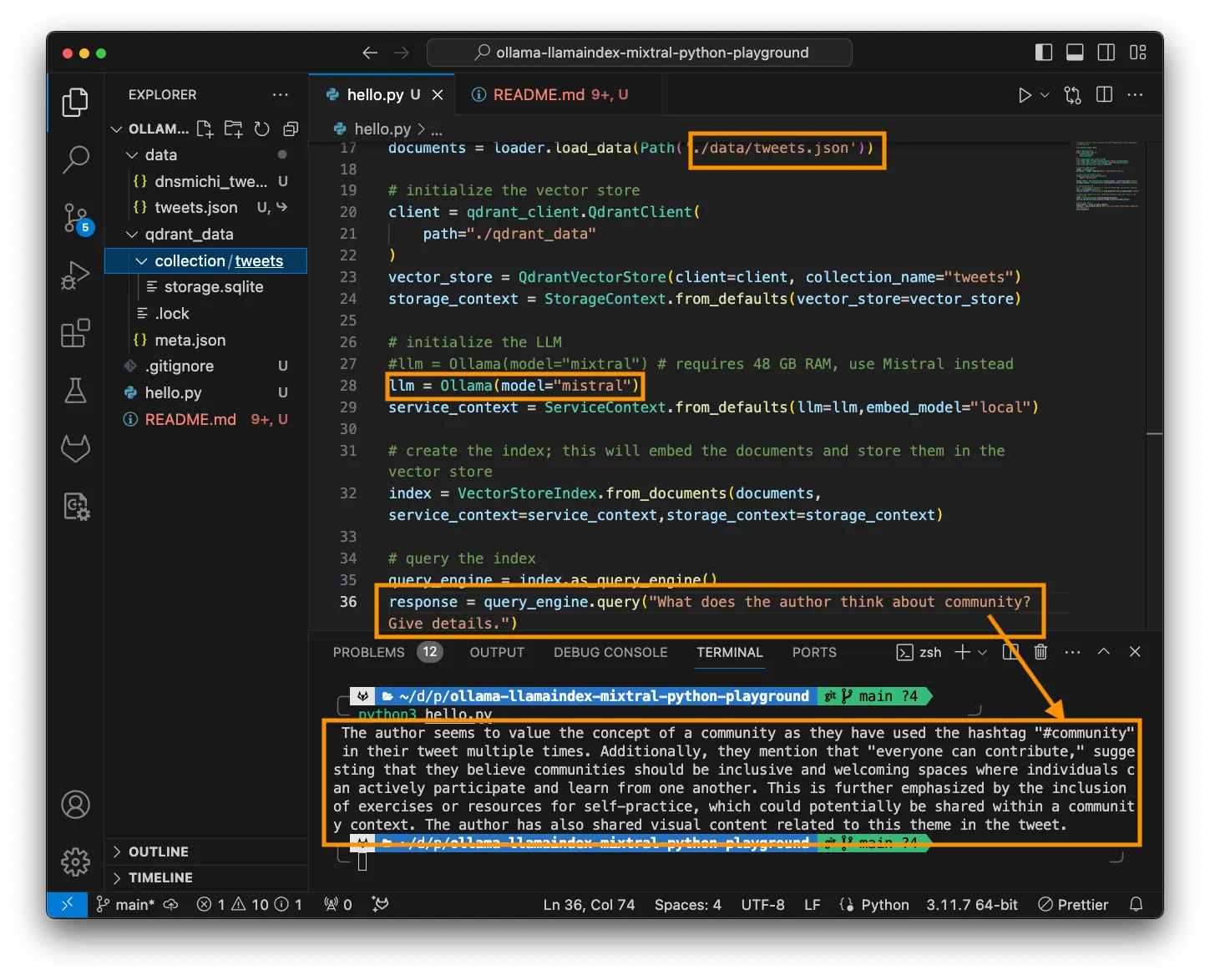

Ollama is aimed at developers and power users who live in the terminal. Install via command line, pull models with a single command, and then script or automate to your heart’s content. It’s lightweight, fast, and integrates cleanly into programming workflows.

The learning curve is steeper, but the payoff is flexibility. It is also what power users choose for versatility and customizability.
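For a sense of what scripting against Ollama can look like, here is a minimal Python sketch. It assumes Ollama is installed and serving its local HTTP API on the default port 11434, and that a model has already been pulled. The model tag `qwen3:8b` and the helper names are illustrative assumptions, not something the tool requires.

```python
import json
import urllib.request

# Default local endpoint; assumed unchanged from a standard install.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> dict:
    """Build the JSON body for a single, non-streaming generation request."""
    return {"model": model, "prompt": prompt, "stream": False}


def ask(model: str, prompt: str, url: str = OLLAMA_URL) -> str:
    """Send the prompt to a locally running Ollama server and return its reply."""
    body = json.dumps(build_request(model, prompt)).encode("utf-8")
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]


# Usage (needs a running Ollama server and a pulled model):
# print(ask("qwen3:8b", "Explain VRAM in one sentence."))
```

Because everything goes through a plain HTTP call on your own machine, the same few lines can be dropped into a larger script or automation.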

Both tools run the same underlying models using identical optimization engines. Performance differences are negligible.

Setting up LM Studio

Go to https://lmstudio.ai/ and download the installer for your operating system. The file weighs about 540MB. Run the installer and follow the prompts. Launch the application.

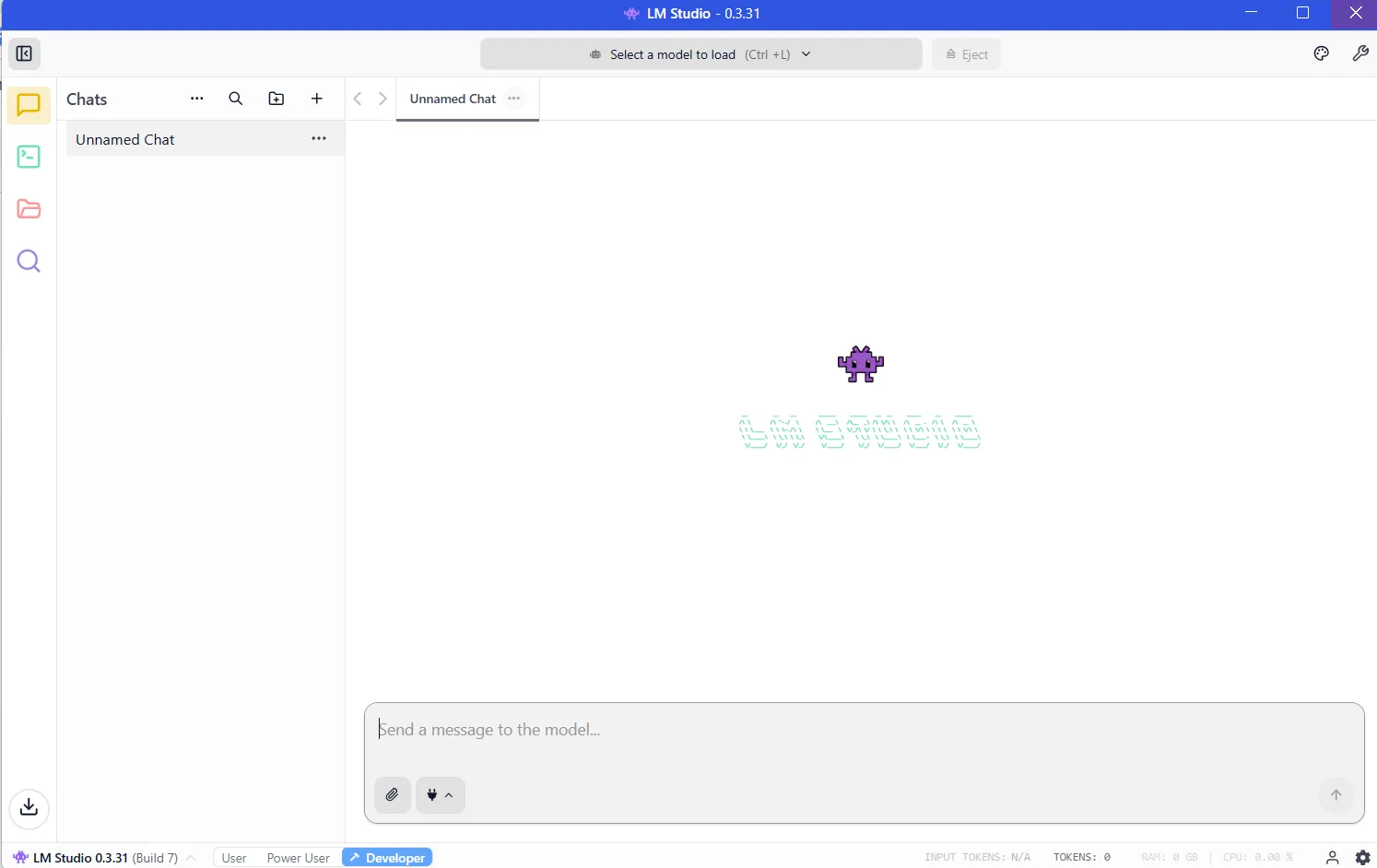

Hint 1: If it asks you which type of user you are, pick “developer.” The other profiles simply hide options to make things easier.

Hint 2: It will recommend downloading OSS, OpenAI’s open-source AI model. Instead, click “skip” for now; there are better, smaller models that will do a better job.

VRAM: The key to running local AI

Once you have installed LM Studio, the program will be ready to run and will look like this:

Now you need to download a model before your LLM will work. And the more powerful the model, the more resources it will require.

The critical resource is VRAM, or video memory on your graphics card. LLMs load into VRAM during inference. If you don’t have enough space, then performance collapses and the system must resort to slower system RAM. You’ll want to avoid that by having enough VRAM for the model you want to run.

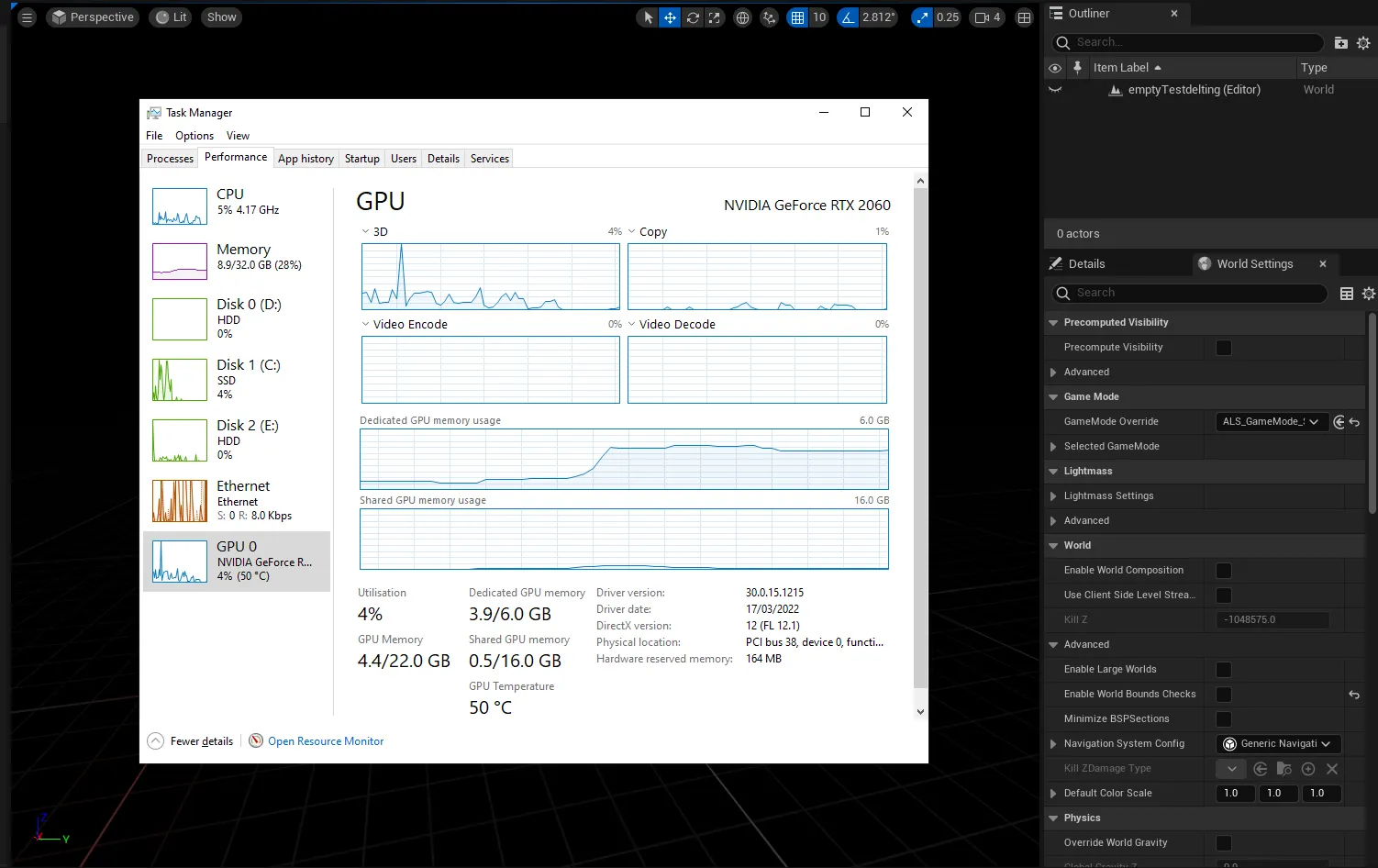

To find out how much VRAM you have, open the Windows Task Manager (Ctrl+Shift+Esc, or Ctrl+Alt+Del and then Task Manager), go to the Performance tab, and click on the GPU entry, making sure you have selected the dedicated graphics card and not the integrated graphics on your Intel/AMD processor.

You will see how much VRAM you have in the “Dedicated GPU memory” section.
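If you have an NVIDIA card, the same number can also be read from a script. This is a small sketch, assuming the `nvidia-smi` utility that ships with NVIDIA’s drivers is on your PATH. It does not cover AMD or Intel GPUs, and the helper names are our own.

```python
import subprocess


def parse_vram_mib(smi_output: str) -> list[int]:
    """Turn nvidia-smi's one-number-per-line output into a list of MiB values."""
    return [int(line.strip()) for line in smi_output.splitlines() if line.strip()]


def query_vram_mib() -> list[int]:
    """Ask nvidia-smi for the total memory of each GPU, in MiB."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.total", "--format=csv,noheader,nounits"],
        capture_output=True,
        text=True,
        check=True,
    ).stdout
    return parse_vram_mib(out)


# Canned example so the parsing step runs even without a GPU:
print(parse_vram_mib("8192\n"))  # [8192]
```

On a machine with an NVIDIA GPU, calling `query_vram_mib()` should return one entry per card.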

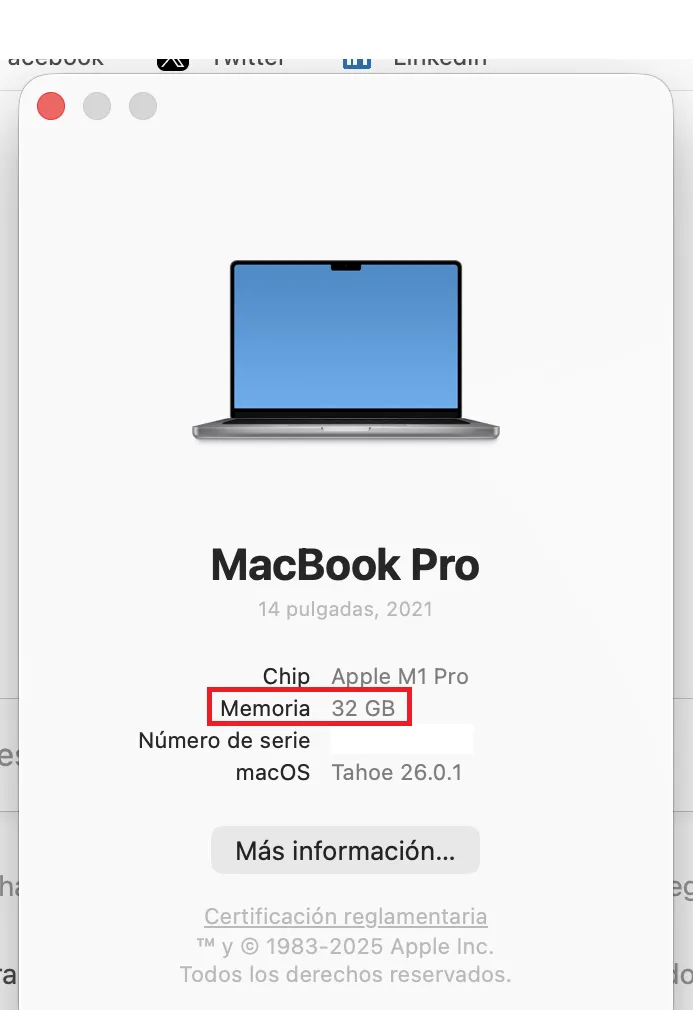

On M-Series Macs, things are easier since they share RAM and VRAM. The amount of RAM on your machine will equal the VRAM you can access.

To check, click on the Apple logo, then click on “About.” See Memory? That’s how much VRAM you have.

You will need at least 8GB of VRAM. Models in the 7-9 billion parameter range, compressed using 4-bit quantization, fit comfortably while delivering strong performance. You’ll know if a model is quantized because developers usually disclose it in the name. If you see BF, FP or GGUF in the name, then you are looking at a quantized model. The lower the number (FP32, FP16, FP8, FP4), the fewer resources it will consume.
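The arithmetic behind that sizing is simple enough to sketch. The weights take roughly parameters times bits-per-weight divided by eight, in bytes. The 20 percent overhead for context and runtime buffers used below is a rough assumption for illustration, not a measured figure.

```python
def estimate_vram_gb(
    params_billions: float, bits_per_weight: int, overhead: float = 0.20
) -> float:
    """Rough VRAM estimate: weight size plus a fractional overhead (an assumption)."""
    # One billion parameters at 8 bits per weight is about 1 GB.
    weight_gb = params_billions * bits_per_weight / 8
    return round(weight_gb * (1 + overhead), 1)


for bits in (16, 8, 4):
    print(f"8B model at {bits}-bit: ~{estimate_vram_gb(8, bits)} GB")
```

By this estimate, an 8B model needs around 19 GB at 16-bit but under 5 GB at 4-bit, which is why 4-bit models in this size range are a reasonable fit for an 8GB card.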

It’s not apples to apples, but think of quantization as the resolution of your screen. You will see the same image in 8K, 4K, 1080p, or 720p. You will be able to grasp everything no matter the resolution, but zooming in and being picky about the details will reveal that a 4K image has more information than a 720p one, though it requires more memory and resources to render.

But ideally, if you are really serious, then you should buy a nice gaming GPU with 24GB of VRAM. It doesn’t matter if it is new or not, and it doesn’t matter how fast or powerful it is. In the land of AI, VRAM is king.

Once you know how much VRAM you can tap, you can figure out which models you can run by going to the VRAM Calculator. Or, simply start with smaller models of less than 4 billion parameters and then step up to bigger ones until your computer tells you that you don’t have enough memory. (More on this technique in a bit.)

Downloading your models

Once you know your hardware’s limits, it’s time to download a model. Click on the magnifying glass icon on the left sidebar and search for the model by name.

Qwen and DeepSeek are good models to use to begin your journey. Yes, they are Chinese, but if you are worried about being spied on, then you can rest easy. When you run your LLM locally, nothing leaves your machine, so you won’t be spied on by the Chinese, the U.S. government, or any corporate entities.

As for viruses, everything we’re recommending comes via Hugging Face, where software is immediately checked for spyware and other malware. But for what it’s worth, the best American model is Meta’s Llama, so you may want to pick that if you are a patriot. (We offer other recommendations in the final section.)

Note that models do behave differently depending on the training dataset and the fine-tuning techniques used to build them. Elon Musk’s Grok notwithstanding, there is no such thing as an unbiased model because there is no such thing as unbiased information. So pick your poison depending on how much you care about geopolitics.

For now, download both the 3B (smaller, less capable) and 7B versions. If you can run the 7B, then delete the 3B (and try downloading and running the 13B version, and so on). If you cannot run the 7B version, then delete it and use the 3B version.
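The trial-and-error approach above amounts to stepping up through model sizes and keeping the last one that loaded. A hedged sketch of that logic follows. Here `can_load` is a hypothetical stand-in for "did the model load without a memory error on my machine," which in practice you find out by trying it in the app.

```python
from typing import Callable, Iterable, Optional


def step_up(sizes_billions: Iterable[int], can_load: Callable[[int], bool]) -> Optional[int]:
    """Try models from smallest to largest; return the largest that loaded, or None."""
    best = None
    for size in sorted(sizes_billions):
        if not can_load(size):
            break  # the first failure ends the search
        best = size  # this size worked, so keep it and try the next one up
    return best


# Pretend this machine handles models up to 7B parameters:
print(step_up([3, 7, 13], lambda size: size <= 7))  # 7
```

Whatever the function returns is the version to keep. The smaller ones can be deleted.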

Once downloaded, load the model from the My Models section. The chat interface appears. Type a message. The model responds. Congratulations: You’re running a local AI.

Giving your model internet access

Out of the box, local models can’t browse the web. They’re isolated by design, so you will interact with them based on their internal knowledge. They will work fine for writing short stories, answering questions, doing some coding, and so on. But they won’t give you the latest news, tell you the weather, check your email, or schedule meetings for you.

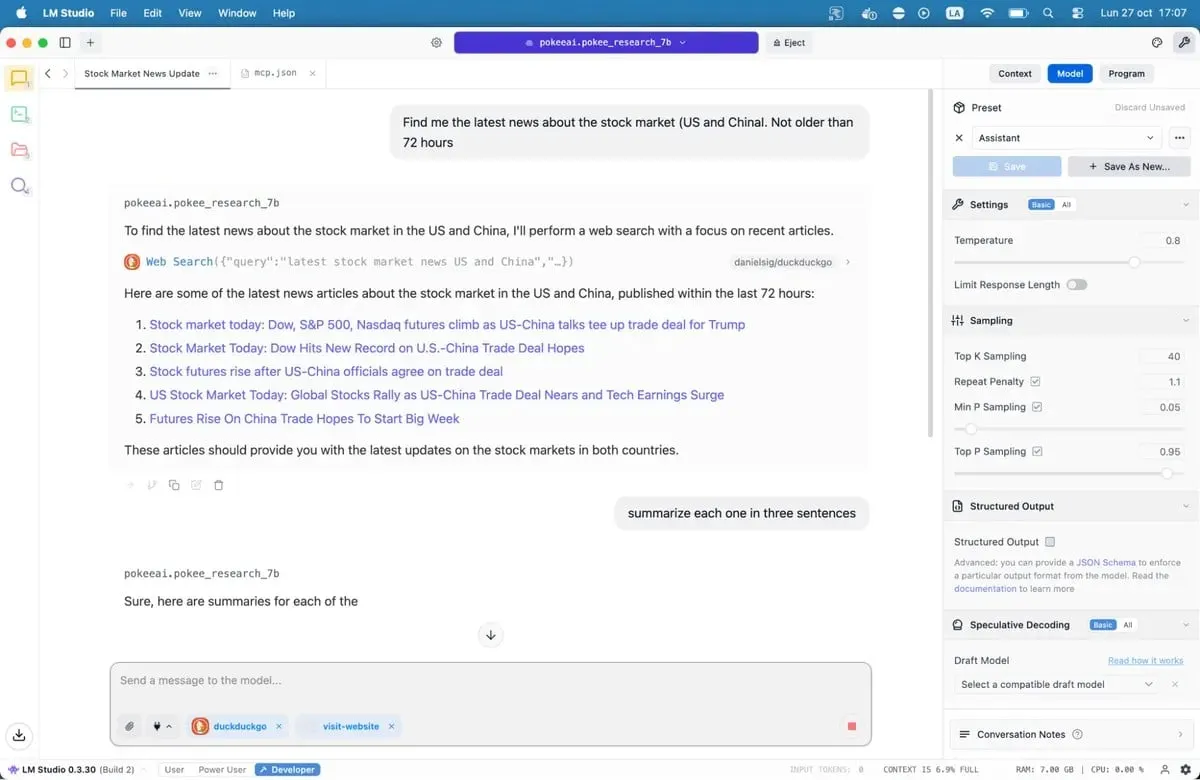

Model Context Protocol servers change this.

MCP servers act as bridges between your model and external services. Want your AI to search Google, check GitHub repositories, or read websites? MCP servers make it possible. LM Studio added MCP support in version 0.3.17, accessible through the Program tab. Every server exposes specific tools: web search, file access, API calls.

If you want to give models access to the internet, then our complete guide to MCP servers walks through the setup process, including popular options like web search and database access.

Once you have added your servers to the MCP configuration file, save the file and LM Studio will automatically load the servers. When you chat with your model, it can now call these tools to fetch live data. Your local AI just gained superpowers.
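As an illustration of what such a configuration can contain, the sketch below builds a minimal one in Python and prints it as JSON. It assumes LM Studio’s `mcp.json` uses the common `mcpServers` layout, with a server name mapped to a `command` and its `args`. The server name `web-search` and the package `example-web-search-mcp` are hypothetical placeholders, not real recommendations.

```python
import json


def make_mcp_config(name: str, command: str, args: list[str]) -> dict:
    """Build a one-server config in the assumed `mcpServers` layout."""
    return {"mcpServers": {name: {"command": command, "args": args}}}


# Hypothetical server entry; swap in a real MCP server package of your choice.
config = make_mcp_config("web-search", "npx", ["-y", "example-web-search-mcp"])
print(json.dumps(config, indent=2))
```

The printed JSON is the kind of content you would paste into the configuration file. Check the server’s own documentation for the exact command and arguments it expects.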

Our recommended models for 8GB systems

There are literally hundreds of LLMs available to you, from jack-of-all-trades options to fine-tuned models designed for specialized use cases like coding, medicine, role play or creative writing.

Best for coding: Nemotron or DeepSeek are good. They won’t blow your mind, but will work fine with code generation and debugging, outperforming most alternatives in programming benchmarks. DeepSeek-Coder-V2 6.7B offers another solid option, particularly for multilingual development.

Best for general knowledge and reasoning: Qwen3 8B. The model has strong mathematical capabilities and handles complex queries effectively. Its context window accommodates longer documents without losing coherence.

Best for creative writing: DeepSeek R1 variants, but you need some heavy prompt engineering. There are also uncensored fine-tunes like the “abliterated-uncensored-NEO-Imatrix” version of OpenAI’s GPT-OSS, which is good for horror; or Dirty-Muse-Writer, which is good for erotica (so they say).

Best for chatbots, role-playing, interactive fiction, customer service: Mistral 7B (especially Undi95 DPO Mistral 7B) and Llama variants with large context windows. MythoMax L2 13B maintains character traits across long conversations and adapts tone naturally. For other NSFW role-play, there are many options. You may want to check some of the models on this list.

For MCP: Jan-v1-4b and Pokee Research 7b are good models if you want to try something new. DeepSeek R1 is another good option.

All of the models can be downloaded directly from LM Studio if you just search for their names.

Note that the open-source LLM landscape is shifting fast. New models launch weekly, each claiming improvements. You can check them out in LM Studio, or browse through the different repositories on Hugging Face. Test options out for yourself. Bad fits become obvious quickly, thanks to awkward phrasing, repetitive patterns, and factual errors. Good models feel different. They reason. They surprise you.

The technology works. The software is ready. Your computer probably already has enough power. All that’s left is trying it.
