When you’ve got ever tried working a big language mannequin regionally by yourself laptop, you already know the quick, painful actuality: these items will aggressively devour each single gigabyte of RAM you personal. I’ve spent numerous hours attempting to optimize native AI setups, and the reminiscence bottleneck is at all times the wall you hit.

However once I was studying via Google’s newest analysis weblog this morning, I truly sat up in my chair. Google has simply quietly introduced a brand new compression know-how known as TurboQuant, and it essentially modifications the mathematics on how synthetic intelligence consumes {hardware}.

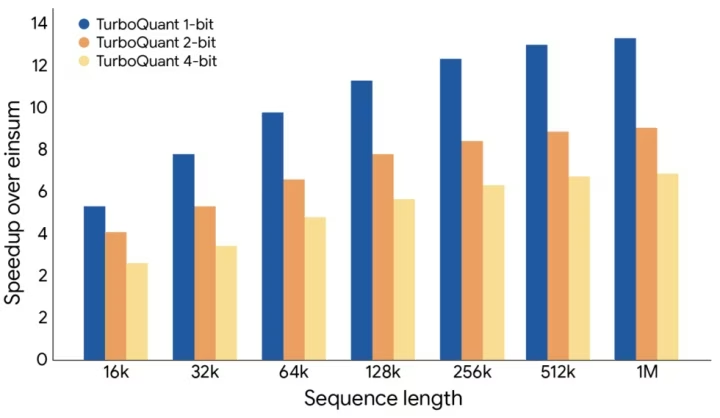

We’re speaking about 6 instances much less reminiscence utilization and 8 instances sooner processing speeds—all with out making the AI “dumber.” I hate throwing across the phrase “revolution,” however that is precisely the sort of breakthrough we have to take AI out of huge, costly server farms and put it instantly into our pockets. Let’s break down precisely what Google simply pulled off.

The Bottleneck We’ve All Been Ignoring: The KV Cache

To know why TurboQuant is such a giant deal, now we have to speak about how AI truly “remembers” your dialog.

Massive Language Fashions (LLMs) don’t learn phrases like we do; they convert ideas into high-dimensional vectors (huge strings of numbers). To keep away from recalculating the which means of each phrase each single time you ask a follow-up query, the AI makes use of one thing known as a Key-Worth (KV) cache. Consider the KV cache because the AI’s digital cheat sheet.

Right here is the issue:

- As your dialog will get longer, that cheat sheet will get huge.

- These vectors comprise a whole lot or hundreds of parameters.

- Storing them requires a ridiculous quantity of high-speed reminiscence.

Traditionally, builders have tried to repair this utilizing a technique known as quantization—which mainly means squeezing the information right into a decrease decision. It saves area, however the nasty aspect impact is that the AI begins hallucinating or giving lower-quality solutions. It was at all times a pressured compromise. Till now.

Enter TurboQuant: How Google Did the Unattainable

In response to their preliminary checks, Google’s TurboQuant utterly bypasses this compromise. It shrinks the mannequin’s reminiscence footprint dramatically with out degrading the standard of its output.

How did they do it? They break up the compression course of into two extremely intelligent steps.

Step 1: PolarQuant and the Geometry of Language

The primary section is a system they name PolarQuant, and the logic behind it’s good.

Usually, AI vectors are plotted utilizing customary Cartesian (XYZ) coordinates. However PolarQuant takes these heavy, advanced coordinates and interprets them into polar coordinates. As a substitute of monitoring a large grid, each vector is abruptly represented by simply two easy items of data:

- Radius: The power or magnitude of the information.

- Angle: The semantic course (the precise which means) of the information.

Google used an ideal analogy for this: Conventional XYZ mapping is like telling somebody, “Stroll 3 blocks East, then 4 blocks North.” PolarQuant modifications the instruction to, “Flip 37 levels and stroll 5 blocks.” It’s a shorter, cleaner, and vastly extra environment friendly method to retailer the very same vacation spot.

Step 2: The “QJL” Security Internet

In fact, aggressively compressing knowledge like this often creates slight deviations or “glitches” within the AI’s understanding. To repair this, Google applied a second layer known as the Quantized Johnson-Lindenstrauss (QJL) methodology.

Don’t let the advanced identify intimidate you. Basically, QJL acts as a microscopic error-correction layer. It makes use of only a single bit (+1 or -1) to symbolize and tweak the vectors, guaranteeing that the vital semantic relationships between phrases aren’t misplaced within the compression. It makes positive the AI’s “consideration” mechanism stays dead-on correct.

Actual-World Testing: No Retraining Required

Ideas are nice, however the precise benchmark numbers are what actually blew my thoughts. Google didn’t simply take a look at this in a vacuum; they ran TurboQuant on well-liked open-weight fashions like Gemma and Mistral.

Listed here are the exhausting information from their checks:

- 6x Reminiscence Discount: The KV cache reminiscence requirement dropped by an element of six.

- 3-Bit Compression: It may possibly compress the cache down to simply 3 bits per parameter.

- Zero Retraining: That is big for builders. You’ll be able to apply TurboQuant to current fashions with out having to spend hundreds of thousands of {dollars} retraining them from scratch.

- Blazing Velocity: When examined on an Nvidia H100 GPU, the 4-bit TurboQuant carried out consideration calculations 8 instances sooner than conventional 32-bit uncompressed keys.

Why I Assume That is the Key to Cell AI

Whereas it’s simple to have a look at this and take into consideration how a lot cash cloud suppliers will save on server prices, I have a look at TurboQuant and see the way forward for the smartphone.

Proper now, to get ChatGPT-level intelligence, your telephone has to ship your knowledge to a cloud server, look ahead to the large computer systems to do the considering, and ping the reply again to you. It requires an web connection, it drains battery, and it poses huge privateness issues.

With algorithms like TurboQuant, the {hardware} limitations of cell gadgets abruptly don’t look so intimidating. If we are able to compress the reminiscence footprint by 6x and pace up the processing by 8x, working a hyper-intelligent, totally non-public AI natively in your smartphone isn’t a pipe dream anymore. It’s imminent.

I truthfully consider we’re transferring towards a world the place probably the most highly effective AI isn’t sitting in a knowledge heart, however resting proper in your pocket.

So, I’m curious to listen to your tackle this. If algorithms like TurboQuant make it attainable to run extremely good AI solely offline in your smartphone, would you lastly ditch the cloud-based apps for the sake of whole privateness? Let me know your ideas down beneath!